Tickmill is the trading name of the Tickmill Group of companies.

Tickmill.com is owned and operated within the Tickmill Group of companies. Tickmill Group consists of: Tickmill UK Ltd, regulated by the Financial Conduct Authority (Registered Office: 3rd Floor, 27 - 32 Old Jewry, London EC2R 8DQ, England) and the Dubai Financial Services Authority as a Representative Office (Reference No. F007663); Tickmill Europe Ltd, regulated by the Cyprus Securities and Exchange Commission (Registered Office: Kedron 9, Mesa Geitonia, 4004 Limassol, Cyprus); Tickmill South Africa (Pty) Ltd, FSP 49464, regulated by the Financial Sector Conduct Authority (FSCA) (Registered Office: Office 11, 140 West Street, Sandton, Gauteng, 2196, South Africa); Tickmill Ltd, regulated by the Financial Services Authority of Seychelles (Address: 3, F28-F29 Eden Plaza, Eden Island, Mahe, Seychelles), and its 100% owned subsidiary Procard Global Ltd, UK registration number 09369927 (Registered Office: 3rd Floor, 27 - 32 Old Jewry, London EC2R 8DQ, England); and Tickmill Asia Ltd, regulated by the Financial Services Authority of Labuan Malaysia (License Number: MB/18/0028; Registered Office: Unit B, Lot 49, 1st Floor, Block F, Lazenda Warehouse 3, Jalan Ranca-Ranca, 87000 F.T. Labuan, Malaysia).

Clients must be at least 18 years old to use the services of Tickmill.

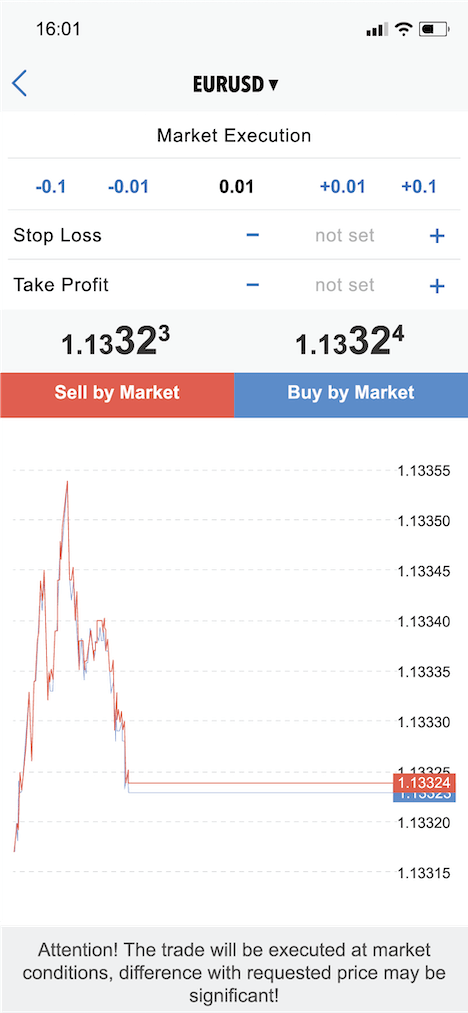

Risk Warning: Trading Contracts for Difference (CFDs) on margin carries a high level of risk and may not be suitable for all investors. Before deciding to trade Contracts for Difference (CFDs), you should carefully consider your trading objectives, level of experience and risk appetite. It is possible for you to sustain losses that exceed your invested capital and therefore you should not deposit money that you cannot afford to lose. Please ensure you fully understand the risks and take appropriate care to manage your risk.

This site contains links to websites controlled or offered by third parties. Tickmill has not reviewed, and hereby disclaims responsibility for, any information or materials posted at any of the sites linked to this site. By creating a link to a third-party website, Tickmill does not endorse or recommend any products or services offered on that website. The information contained on this site is intended for information purposes only. It should therefore not be regarded as an offer or solicitation to any person in any jurisdiction in which such an offer or solicitation is not authorised, or to any person to whom it would be unlawful to make such an offer or solicitation, nor as a recommendation to buy, sell or otherwise deal with any particular currency or precious metal trade. If you are not sure about your local currency and spot metals trading regulations, you should leave this site immediately.

You are strongly advised to obtain independent financial, legal and tax advice before proceeding with any currency or spot metals trade. Nothing in this site should be read or construed as constituting advice on the part of Tickmill or any of its affiliates, directors, officers or employees.

The services of Tickmill and the information on this site are not directed at citizens/residents of the United States, and are not intended for distribution to, or use by, any person in any country or jurisdiction where such distribution or use would be contrary to local law or regulation.